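Intuitively, the model tries to predict the best hyperplane. But what loss function does the model optimize?

The error for the iᵗʰ point is:

Error = yᵢ - yₚ

where yᵢ is the actual value and yₚ is the predicted value for the iᵗʰ point.

Now, why the square and the square root? This is best explained with a simple example. Imagine I have 2 data points, one being 0.5 and the other -0.5, and my model predicts 0 for both. The raw errors are 0.5 and -0.5, which sum to 0 even though neither prediction is correct. Squaring removes the sign so the errors cannot cancel, and the square root brings the result back to the original units.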
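The two-point example can be checked with a few lines of Python. This is a minimal sketch that assumes "the square and square root" refers to the root mean squared error (RMSE); the text does not name the loss, and the variable names are only illustrative.

```python
import math

# Values from the example: two targets, both predicted as 0.
y_true = [0.5, -0.5]
y_pred = [0.0, 0.0]

# Raw errors y_i - y_p keep their sign, so they can cancel each other out.
errors = [yt - yp for yt, yp in zip(y_true, y_pred)]
mean_error = sum(errors) / len(errors)

# Squaring makes every error non-negative; the square root returns
# the result to the same units as y. (Assumed loss: RMSE.)
mse = sum(e ** 2 for e in errors) / len(errors)
rmse = math.sqrt(mse)

print(mean_error)  # 0.0
print(rmse)        # 0.5
```

The plain average of the errors reports 0, as if the model were perfect, while the squared version reports 0.5, which matches how far off each prediction really is.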

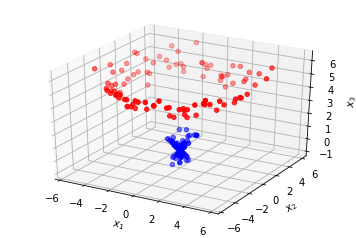

Now you will get a clear picture of what's happening. Imagine the model determines ∏ to be the best hyperplane, the one that estimates the data with minimum error. Not all points lie on the hyperplane, so the perpendicular distance from the plane to each point is considered the error. From LR, we know that everything eventually boils down to an optimization problem, where we have to maximize or minimize some quantity. Here we minimize the error, i.e. the distance of the point from the plane. Well, for that you need to refer to my previous blog mentioned above.

The model uses something called an optimization equation to find the best hyperplane that fits our data. As the name suggests, we have to optimize something; in this case we have to minimize the error as mentioned above.

How do we minimize the error? By choosing the best hyperplane. Let's understand this using simple geometry.

As you can see, I have drawn a green boundary around all the points in the plane. With normal intuition we can now say which hyperplane best fits our data.

∏1 covers some of the data, but a majority of our points are far away from the hyperplane, which results in a high value of loss.

∏2 divides the data well. It is better than ∏1, but there is still scope for improvement.

∏3 can be considered the best plane: it lies close to all the points and passes through the region holding the majority of them.

Now, if we take the ellipse as an estimate of our data spread, which hyperplane passes through the majority of the ellipse? It is ∏3, and hence it is the best among the three.
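The comparison of candidate hyperplanes can also be sketched in code. The snippet below follows the geometric description here and scores each candidate by the mean squared perpendicular distance of the points from it; the residual yᵢ - yₚ is instead measured along the y axis. The points and the three lines are hypothetical stand-ins, not the data or the planes from the figure, and the function names are only illustrative.

```python
import math

def perpendicular_distance(point, w, b):
    """Distance from a 2D point to the line w[0]*x + w[1]*y + b = 0."""
    x, y = point
    return abs(w[0] * x + w[1] * y + b) / math.hypot(w[0], w[1])

def mean_squared_distance(points, w, b):
    """Average of the squared perpendicular distances to the line."""
    return sum(perpendicular_distance(p, w, b) ** 2 for p in points) / len(points)

# Hypothetical points scattered around the line y = x (not the figure's data).
points = [(0, 0.2), (1, 0.8), (2, 2.1), (3, 2.9), (4, 4.2)]

# Hypothetical candidate lines, each given as (w, b) for w[0]*x + w[1]*y + b = 0.
candidates = {
    "plane_1": ((0.0, 1.0), 0.0),   # y = 0
    "plane_2": ((1.0, -2.0), 0.0),  # y = x / 2
    "plane_3": ((1.0, -1.0), 0.0),  # y = x
}

# Score every candidate and keep the one with the smallest loss.
losses = {name: mean_squared_distance(points, w, b)
          for name, (w, b) in candidates.items()}
best = min(losses, key=losses.get)

print(best)  # plane_3
```

With these made-up points the loss drops from `plane_1` to `plane_2` to `plane_3`, which mirrors the intuition above: the line that runs through the bulk of the data has the smallest total distance to the points.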
